The Claim

If you’ve seen these stories lately, they often sound like this:

- “My child called crying — it was AI.”

- “A scammer cloned my voice and set up payments from my account.”

- “Deepfake kidnappings are everywhere.”

Skeptics often respond: “That’s impossible. AI can’t sound like a real person from a small clip.”

What We Found

The core claim is true: voice-cloning scams are real, and credible authorities have issued warnings.

In the U.S., police warnings describe scammers using short audio clips from social media, voicemail greetings, or videos to create convincing voice replicas, then calling family members with urgent, emotional stories designed to extract money quickly.

In the UK, National Trading Standards described a wave of AI-assisted fraud where criminals harvest personal data and use AI-generated voice clones to simulate consent for direct debits, sometimes without victims realizing payments are being taken.

Consumer watchdog Which? also reported on this pattern, describing “lifestyle survey” cold calls used to gather details and create voice clones that enable unauthorized payments.

So yes: this isn’t just internet lore. It’s being treated as a real and evolving fraud method.

How These Scams Work (In Plain English)

Voice cloning scams generally fall into two buckets:

1) The “panic call” family scam (fast money)

This is the version U.S. police warnings often highlight:

- The caller sounds like a child/grandchild/relative.

- They claim an emergency (“accident,” “arrest,” “stuck somewhere”).

- They urge secrecy (“don’t tell mom/dad,” “stay on the phone”).

- They demand immediate payment.

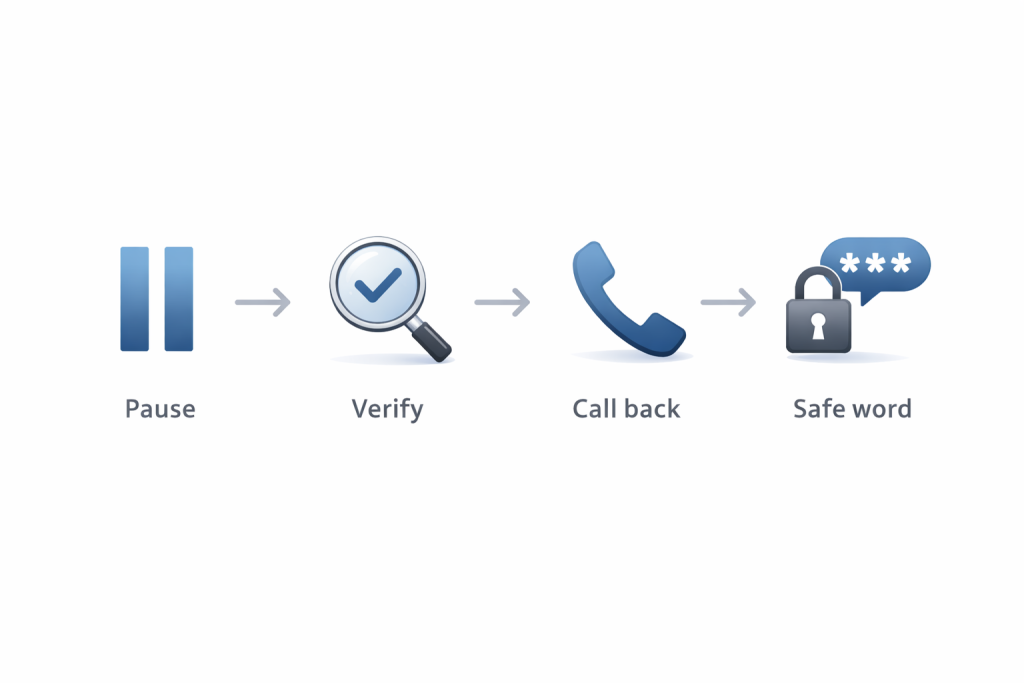

This approach works because it hijacks the victim’s emotions and leaves little time for verification. Police guidance explicitly recommends pausing, verifying, calling back on a known number, asking a personal question, and setting a family safe word.

2) The “consent simulation” scam (slow drain)

This is the version UK consumer protection groups described:

- It starts with a “survey” or harmless call that gathers personal details.

- The scammer uses the recorded audio to clone the victim’s voice.

- The clone is then used to simulate consent for direct debits or financial actions.

- Victims may not notice immediately.

National Trading Standards described organized operations harvesting data and using voice clones to deceive financial providers.

Why This Is Getting Worse Now

Two forces are colliding:

- Better tools: Voice synthesis is improving and becoming cheaper.

- More raw material: People post hours of audio online (stories, vlogs, reels, voice notes, livestreams).

On top of that, experts tracking AI incidents have warned that deepfake fraud is scaling: tools are easier to use and “impersonation for profit” is increasingly common.

European law enforcement has also warned about AI turbocharging organized crime, including scams and the abuse of synthetic media.

“But Wouldn’t I Notice It’s Fake?”

Sometimes yes. Sometimes no.

A modern voice clone doesn’t need to be perfect. It just needs to be believable enough during a high-stress moment. Most people aren’t listening like audio engineers when they think a loved one is in danger.

Scammers also stack the deck:

- noisy background audio

- crying or panic that masks imperfections

- urgency and interruption

- instructions that prevent verification (“don’t hang up!”)

That’s why these scams often target older relatives and caregivers: people who may be more likely to react emotionally and less likely to question why the call is happening at all.

What You Can Do (Actionable, Not Alarmist)

Set a family “safe word”

Police guidance recommends setting a family safe word used only in real emergencies.

It can be simple: a random phrase only close family knows.

Verify with a call-back

If you get a panic call:

- Hang up.

- Call your loved one back using a number already saved in your phone.

- If they don’t answer, call a second trusted contact.

Ask a question AI can’t easily fake in the moment

Example prompts:

- “What was the name of our first dog?”

- “What did we eat at (specific place) last weekend?”

- “What nickname do you call me that nobody else uses?”

Tighten what you share publicly

This doesn’t mean “go silent.” It means:

- consider limiting public access to videos featuring kids/elderly relatives

- replace personalized voicemail greetings (especially ones that state your full name) with a generic one

- review privacy settings on platforms that expose lots of audio

The Misinformation Layer: When True Stories Turn Into Hype

Because voice scams are real, opportunists exaggerate them for clicks:

- “AI kidnappings are everywhere”

- “Any call could be a deepfake”

- “Never trust voice again”

That framing isn’t helpful. The correct takeaway is:

Verification beats fear. For panic calls, a two-step check (safe word + call-back) defeats most versions of this scam.

Verdict

True.

Police and consumer protection groups have issued real warnings describing AI voice cloning scams used for panic calls and unauthorized payment setups. The details vary by region, but the technique and the threat are real.